August 29, 2004 | [Works on HubPages] Data science without domain knowledge: An analysis reveals two salary policies in the AI/ML and Big Data fields

So, does it mean that simply having a massive dataset that covers every aspect of life is the answer? Like ChatGPT-5, 6, 7, or even ∞?

The answer is, unfortunately, NO! Absolutely not!

An Example

Imagine you’re skeptical. Copy the section above and ask ChatGPT to complete it with the prompt, “Complete the following Medium story.” If you believe that “in the future, ChatGPT will have enough domain knowledge to replace data scientists/analysts in business analysis,” you will likely find that the story ChatGPT generates lacks conviction.

This example shows that the core challenge lies not in domain knowledge but in convincing others to trust your work. It is similar to the challenge that keeps every entrepreneur up at night: figuring out how to make customers believe in your business. At the heart of this relationship lies the need to distinguish between what the customer wants and what your business provides. You can use a chatbot to help customers better understand your services, but it can’t change their needs. And here’s the irony: sometimes even customers themselves can’t tell you what they truly need! If you’re a data scientist/analyst, this is probably a familiar scenario.

The real breakthrough happens when you learn to flip the situation: instead of trying to convince customers, let the data convince you of the shift in customer behavior. That’s when your business starts to run smoothly. Customer trends and behaviors are always changing. At one moment, customers may instinctively see you as their go-to seller, but as soon as those trends shift, if you fail to catch up, you will no longer be that seller in their eyes!

Returning to our initial example: if data is truly the language of the customer and we can analyze it to understand customers’ evolving behaviors, then it is those insights, rather than redundant expertise across multiple domains, that would convince us of ChatGPT’s potential to replace data scientists/analysts.

Stepping Out of the Comfort Zone

Isn’t domain knowledge always seen as a critical criterion when hiring data scientists/analysts?

That’s because it helps create a safe buffer during the current transition period before businesses completely shift from traditional business analytics to fully data science-driven analytics. So, why do businesses feel insecure about hiring data scientists/analysts without domain knowledge?

The answer is: the "lack of scientific validity".

Sounds strange, doesn’t it? Data science is, after all, a science — so why the lack of scientific validity? Hold on, let me explain with a fictional narrative about a real-world analysis. This example will also bring us back to the very title of this story: What can a data scientist/analyst do without domain knowledge?

An Analysis

Keelan is a businessman and the owner of a tech startup. One day, he decided to hire a data scientist to analyze customer behavior data in order to boost sales of a newly launched product. He headed to Upwork to search for a suitable candidate and, after some searching and consideration, settled on one and decided to give them a chance.

Coincidentally, that candidate was me. After reviewing my profile, Keelan reached out to me directly, admitting that he shared my belief that domain knowledge wasn’t a necessary requirement for a data scientist (I assume the nature of his business contributed to this perspective). However, to show that he had thought his decision through, he asked me to prove my abilities by working with a dataset he had prepared.

The Data

The dataset, named AI/ML Salaries, contains salary information for 1,195 individuals working in the AI/ML and Big Data field from 2020 to 2022. It was uploaded to the Kaggle platform by BryanB under a CC0: Public Domain license. The analysis in this story is based on a version of the dataset uploaded in 2023.

Data column details:

- work_year: The year the salary was paid.

- experience_level: The experience level of the employee during the year, categorized as follows:

— EN: Entry-level / Junior

— MI: Mid-level / Intermediate

— SE: Senior-level / Expert

— EX: Executive-level / Director

- employment_type: The type of employment for the role, with possible values:

— PT: Part-time

— FT: Full-time

— CT: Contract

— FL: Freelance

- job_title: The role held during the year.

- salary: The total gross salary amount paid.

- salary_currency: The currency of the salary paid (in ISO 4217 format).

- salary_in_usd: The salary converted to USD, based on the average exchange rate of the respective year (via fxdata.foorilla.com).

- employee_residence: The employee’s primary country of residence during the work year (in ISO 3166 format).

- remote_ratio: The proportion of work done remotely, with possible values:

— 0: No remote work (less than 20%)

— 50: Partially remote

— 100: Fully remote (more than 80%)

- company_location: The country of the employer’s main office or contracting branch (in ISO 3166 format).

- company_size: The average number of employees at the company during the year:

— S: Less than 50 employees (small)

— M: 50 to 250 employees (medium)

— L: More than 250 employees (large)
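Clustering algorithms need numeric inputs, so the coded columns above have to be encoded before any segmentation. The sketch below shows one possible way to do that with pandas. The ordinal ranks for `experience_level` and `company_size`, the one-hot treatment of `employment_type`, the helper name `encode_features`, and the sample rows are all my own assumptions for illustration, not details taken from the original notebook.

```python
import pandas as pd

# Assumed ordinal encodings (hypothetical choice for this sketch); the dataset
# itself only stores the letter codes listed above.
EXPERIENCE_ORDER = {"EN": 0, "MI": 1, "SE": 2, "EX": 3}
SIZE_ORDER = {"S": 0, "M": 1, "L": 2}


def encode_features(df: pd.DataFrame) -> pd.DataFrame:
    """Turn the coded salary columns into numeric features for clustering."""
    out = pd.DataFrame(index=df.index)
    out["experience"] = df["experience_level"].map(EXPERIENCE_ORDER)
    out["company_size"] = df["company_size"].map(SIZE_ORDER)
    # remote_ratio is already numeric (0, 50, 100); rescale it to [0, 1].
    out["remote"] = df["remote_ratio"] / 100
    out["salary_in_usd"] = df["salary_in_usd"]
    # employment_type has no natural order, so one-hot encode it.
    dummies = pd.get_dummies(df["employment_type"], prefix="emp", dtype=int)
    return pd.concat([out, dummies], axis=1)


# Tiny illustrative rows (made up, not taken from the real dataset).
sample = pd.DataFrame({
    "experience_level": ["EN", "SE", "EX"],
    "employment_type": ["FT", "FT", "CT"],
    "salary_in_usd": [50_000, 150_000, 230_000],
    "remote_ratio": [0, 50, 100],
    "company_size": ["S", "M", "L"],
})
features = encode_features(sample)
print(features)
```

Whether experience level should be treated as ordinal or one-hot is itself a modeling decision; each choice yields a different distance geometry and therefore, potentially, a different segmentation strategy.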

Analytical Strategy

Given my complete lack of domain expertise for this type of data, I decided to embark on a knowledge discovery journey, starting with unsupervised learning algorithms — specifically, data clustering. My plan was to generate dozens of different clustering approaches (which I’ll refer to as segmentation strategies) using all the methods and distance metrics I could conceive.

Once I had these diverse clustering results in hand, I would conduct an in-depth analysis of each approach. Ultimately, I aimed to select the clustering result that I deemed most reasonable and present a comprehensive analysis based on it.

The details of my final analysis are as follows (or you can see its Jupyter notebook version here):

Two Salary Policies

The bilateral chart above, produced during the exploratory data analysis (EDA), compares two clusters that I provisionally call cluster 0 and cluster 1. It shows two salary distributions suggested by these clusters: one bimodal (cluster 0) and one unimodal (cluster 1). The two distributions differ markedly in shape, yet their expected values differ by less than 10% (128,170 for cluster 0 vs. 117,571 for cluster 1), suggesting a kind of macroeconomic equilibrium between them. At this point, the term “salary policy” came to mind for these two distributions. However, to compensate for a lack of domain knowledge, a data scientist must always prioritize caution.
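Two quick checks can back up that reading without relying on eyeballing the chart. The first is arithmetic on the two means quoted above. The second compares the Bayesian information criterion (BIC) of one- and two-component Gaussian mixtures; this is a technique I am substituting for illustration, not one described in the analysis, and the helper name `preferred_components` and the synthetic salaries are hypothetical. Note that the number of Gaussian components is only a proxy for the number of modes: two heavily overlapping components can still form a unimodal curve.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

# 1) Relative gap between the two cluster means quoted in the text.
mean_c0, mean_c1 = 128_170, 117_571
mean_gap_pct = 100 * (mean_c0 - mean_c1) / mean_c1
print(f"mean gap: {mean_gap_pct:.1f}%")


# 2) Does a 1- or 2-component Gaussian mixture describe the salaries better?
def preferred_components(values, candidates=(1, 2)) -> int:
    """Return the candidate component count with the lowest BIC."""
    X = np.asarray(values, dtype=float).reshape(-1, 1)
    bics = [GaussianMixture(n_components=k, n_init=3, random_state=0).fit(X).bic(X)
            for k in candidates]
    return candidates[int(np.argmin(bics))]


# Synthetic salaries for illustration only (not the real dataset).
rng = np.random.default_rng(0)
unimodal = rng.normal(120_000, 30_000, size=1_000)
bimodal = np.concatenate([rng.normal(60_000, 15_000, size=500),
                          rng.normal(190_000, 20_000, size=500)])
print(preferred_components(unimodal), preferred_components(bimodal))
```

The mean gap works out to about 9.0% relative to cluster 1, consistent with the “less than 10%” in the text.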

Validating Insights Without Domain Expertise

To increase the validity of the analysis, I explored the data insights behind each chart from the EDA phase and cross-checked them against external sources. Two reference sources were readily available: Google Search and Google Trends. First, I tried searching with keywords like “bimodal pay policy”, “bimodal salary distribution in AI/ML jobs”, and “data jobs salary distribution”, but they did not help much.

A Shift in 2022

One thing I noticed in this chart is a significant shift in salary policy in 2022: a substantial movement (approximately 4% of the total 48% cohort belonging to cluster 0, which represents the bimodal salary policy) from the bimodal salary policy to the unimodal one. So I continued searching with keywords like “AI breakthrough in 2022”, “data jobs decline in 2022”, and so on, and finally found an analysis on Interview Query named The Data Science Job Market is Disappearing. This analysis sheds light on some major fluctuations in the 2022 job market for data analytics-related positions.

A "data scientist" is a luxury while a "data analyst" on the other hand average take home is 30% less. So there's a shift in companies looking for cheaper labor by changing position tiles to more economical conditions.

We saw this earlier in the year when a recruiting client of Interview Query's ended up downgrading their data science manager hire from a $200K salary role to just a $120K data analyst IC role.

— Interview Query

What I got with Google Trends was also remarkable:

Results from Google Trends show a sudden increase in searches for the keyword “Data Analyst” in January 2022. Such fluctuations in the job market may help explain the change in the salary policy ratio in 2022.
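The year-over-year shift can be quantified by computing, for each `work_year`, the share of rows assigned to the bimodal cluster. The sketch below assumes a pandas DataFrame with a hypothetical `cluster` column holding the segmentation labels (0 for the bimodal policy, as in the text); the helper name and the toy rows are made up for illustration.

```python
import pandas as pd


def bimodal_share_by_year(df: pd.DataFrame, bimodal_label: int = 0) -> pd.Series:
    """Percentage of rows assigned to the bimodal cluster in each work_year."""
    is_bimodal = df["cluster"].eq(bimodal_label)
    return is_bimodal.groupby(df["work_year"]).mean().mul(100).round(2)


# Toy rows for illustration; "cluster" is a hypothetical column name.
toy = pd.DataFrame({
    "work_year": [2021, 2021, 2021, 2021, 2022, 2022, 2022, 2022, 2022],
    "cluster":   [0,    0,    1,    1,    0,    0,    1,    1,    1],
})
share = bimodal_share_by_year(toy)
print(share)
```

On the toy rows this gives 50% for 2021 and 40% for 2022; run on the real labels, the same computation would produce the year-by-year proportions cited in the takeaways below.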

Data Insights

Once you have evidence supporting the external validity of your current segmentation strategy, the next step is to enrich your analysis with data insights. All insights should be geared, more or less, toward the big picture and decision support.

Of the two salary policies applied, the unimodal salary policy is predominant, accounting for 57.8% of the data points.

The bar chart shows the interaction between entry-level positions and executive-level positions, demonstrating the cross-impact of salary policies on these positions. This is reinforced when looking at the change in average salary across experience levels from the unimodal policy to the bimodal policy:

Accordingly, experienced positions show a positive change (from 152,901 to 232,490 for EX and from 128,361 to 168,549 for SE), while the opposite occurs for less experienced positions (from 102,308 down to 73,282 for MI and from 81,665 down to 36,533 for EN).
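The size of these shifts is easier to compare as percentages. The quick check below uses only the average salaries quoted in the paragraph above; no other data is assumed.

```python
# Average salary (USD) by experience level, as quoted in the text.
unimodal_avg = {"EX": 152_901, "SE": 128_361, "MI": 102_308, "EN": 81_665}
bimodal_avg = {"EX": 232_490, "SE": 168_549, "MI": 73_282, "EN": 36_533}

# Relative change when moving from the unimodal to the bimodal policy.
pct_change = {
    level: 100 * (bimodal_avg[level] - unimodal_avg[level]) / unimodal_avg[level]
    for level in unimodal_avg
}
for level, change in pct_change.items():
    print(f"{level}: {change:+.1f}%")
```

This gives about +52.1% for EX, +31.3% for SE, -28.4% for MI, and -55.3% for EN, so entry-level averages fall the most in relative terms.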

The cross-impact of salary policies can also be seen when conducting exploratory analysis related to company sizes.

Accordingly, the interaction between large companies and small companies can be partially explained through the non-homogeneous distribution of personnel across experience levels in these two types of companies. Small companies have a larger proportion of personnel at entry and mid-level experience (totaling 67%), while large companies have a higher concentration of personnel at mid and senior experience levels (totaling 77%).

The unimodal salary policy is applied to remote jobs at a higher rate than to non-remote jobs.

Key Takeaways

Market perspective:

- There are at least two pay policies in the AI/ML job market. One has a unimodal distribution while the other has a bimodal distribution.

- The expectation of the bimodal distribution is $128,170, slightly higher than that of the unimodal distribution, $117,571.

- The unimodal pay policy is the most popular, with 57.82% of all data entries.

- There is a sharp shift from the bimodal to the unimodal pay policy in 2022, with the share of the bimodal pay policy dropping from 48.01% in 2021 to 40.2% in 2022. Interview Query’s analysis also shows that there were major fluctuations in the job market during this period.

Employee perspective:

- Experienced employees benefit more from the bimodal pay policy, while mid-level and entry-level employees benefit more from the unimodal pay policy.

- Employees working at medium-sized companies or doing remote work are less likely to be affected by the bimodal pay policy than those working at large and small companies or doing non-remote work.

Company perspective:

- Although disproportionately affected by the bimodal pay policy, small companies seem to have an advantage in attracting entry-level employees. Therefore, instead of just paying low wages to these employees, small companies can invest in long-term strategies that foster loyalty and strengthen the bond between employees and the company.

I sent Keelan the analysis and waited for his response.

"Lack of Scientific Validity"

Surprisingly, after I sent the analysis to Keelan at 10 AM, I received a response just half an hour later. A thought crossed my mind: “Has he prepared a generic email to respond to all candidates?” I quickly read through the email, and it didn’t take long to realize the challenge I was facing. The email read as follows:

Hello Thien An,

Before receiving your analysis, I had read dozens of analyses from previous candidates, most of whom applied clustering techniques and consequently produced nearly 20 different clustering methods. I must admit that your analysis is very convincing and different from the rest. However, having more than 20 different clustering methods for the same data, each with its own convincing rationale, has raised questions for me about the validity of analyses based on unsupervised clustering.

And by the way, let me remind you of a classic example of the situation we’re facing here. You surely know how the total revenue generated by arcades relates to the total number of computer science doctorates awarded in the US from 2000 to 2009, right? Or how the total number of pirates relates to the global average temperature from 1820 to 2000? The question is: without domain knowledge, how can we be sure that these analyses won’t turn out to be spurious findings like those?

Intuition always gives us faith in a kind of data science so powerful that it serves as a foundation for domain knowledge rather than the other way around, and I always feel lucky to work with data scientists who share this belief. I also believe there is a way to achieve scientific validity and apply it even to unsupervised clustering algorithms. Could you help me with such a method to verify the conclusions in this analysis?

Regards,

Keelan

Scientific Validity

A demand for scientific validity? This reminded me that even the MIT xPro online course ‘Data Science and Big Data Analytics: Making Data-Driven Decisions’, which is not specialized but is designed to broadly cover the key knowledge of data science, did not mention any such method when introducing the validity of clustering methods. Yet this is the first issue a data scientist must consider if they want to start a career as a data scientist without domain knowledge. Keelan and I had indeed found common ground here!

The reason a scientific validation method for clustering still doesn’t exist, at least at the time this story is published, is that there is no consistent definition of what a “cluster” is.

Yes, you heard that right. We still haven't defined what a cluster is while continuously publishing clustering algorithms and analyses about them!

In fact, this is the most effective way we have so far to better understand what a “cluster” means, since each method captures one intuitive aspect of the concept. One problem is that as the dimensionality of the data increases, the distance functions used to evaluate clusters lose much of their usefulness due to a phenomenon called the curse of dimensionality. To work around this limitation of distance functions, scientists turned to neighborhood-preserving ideas with the birth of the t-SNE algorithm, which converts distances into neighbor probabilities; its many positive results in supporting clustering have made it one of the most popular algorithms today.
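The curse of dimensionality can be seen directly as distance concentration: as the number of dimensions grows, the nearest and farthest neighbors of a point end up at almost the same distance, so distance-based cluster criteria lose their contrast. The sketch below measures the relative contrast (max - min) / min of distances from one query point to uniformly random points; the uniform data, point count, and helper name are assumptions chosen for illustration.

```python
import numpy as np


def relative_contrast(dim: int, n_points: int = 500, seed: int = 0) -> float:
    """(max - min) / min of distances from one random query to random points."""
    rng = np.random.default_rng(seed)
    points = rng.uniform(size=(n_points, dim))
    query = rng.uniform(size=dim)
    dists = np.linalg.norm(points - query, axis=1)
    return float((dists.max() - dists.min()) / dists.min())


for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(dim), 3))
```

In two dimensions the farthest point is many times farther than the nearest one; in a thousand dimensions the ratio shrinks to a small fraction, which is why "close" and "far" stop meaning much.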

However, the challenge doesn’t stop there. Ironically, even when all clusters are represented in a two-dimensional Euclidean space, we still have difficulty defining a cluster. This has led to the emergence of fuzzy clustering, density-based clustering, and other families of algorithms.
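Even in two dimensions, different definitions of "cluster" lead to different answers. The sketch below uses scikit-learn's synthetic two-moons dataset (an illustrative choice, not data from this story) and compares a centroid-based definition (k-means) with a density-based one (DBSCAN) against the known moon labels, using the adjusted Rand index (ARI). The `eps` and `min_samples` values are assumptions tuned for this toy data.

```python
from sklearn.cluster import DBSCAN, KMeans
from sklearn.datasets import make_moons
from sklearn.metrics import adjusted_rand_score

X, y_true = make_moons(n_samples=300, noise=0.05, random_state=0)

# Centroid-based definition: a cluster is the set of points nearest a center.
kmeans_labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(X)
# Density-based definition: a cluster is a dense region separated by sparse gaps.
dbscan_labels = DBSCAN(eps=0.2, min_samples=5).fit_predict(X)

ari_kmeans = adjusted_rand_score(y_true, kmeans_labels)
ari_dbscan = adjusted_rand_score(y_true, dbscan_labels)
print(f"k-means ARI: {ari_kmeans:.2f}, DBSCAN ARI: {ari_dbscan:.2f}")
```

Here the density-based definition recovers the moons while k-means cuts across them; on other shapes the ranking can flip, which is the point: neither definition is universally "correct".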

At present, there are some new directions in this field that aim to leverage advances in explainable artificial intelligence, typically by using machine learning models within clustering algorithms. However, as long as we lack a scientific validation method for the clustering problem, we can’t have a truly science-based clustering method, and our efforts on this issue will continue to diverge!

Scientific Validation Method for Clustering

I will end my story with the content of the response email I sent to Keelan.

Hello Keelan,

I think I mentioned such a method in my analysis. Please allow me to explain here.

Such a method can be constructed based on the hypothesis of a structure called the Platonic structure of Cohesive-Convergence Groups (CCGs).

- Deciphering Cohesive-Convergence Groups in Neural Network Optimization.


Accordingly, the structure of CCGs that emerges during the training of a neural network with ideal generalization capability will be unique, and this structure also contains information about the clusters. The scientific method for evaluating clusters is built on this structure in a way similar to the A/B testing method. The steps are as follows:

- Initialize a powerful neural network.

- Conduct training and sample the cohesive values between pairs of data points.

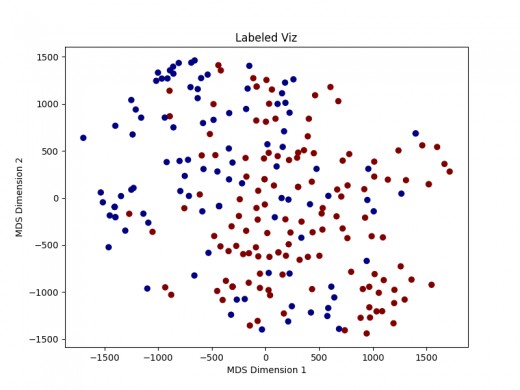

- Project the results onto a two-dimensional space using multidimensional scaling (MDS); the result will be similar to the chart below.

- Qualitatively evaluate the result and record it as a binary value: 1 if there is noticeable cluster-based polarization and 0 if there is none.

- Repeat the above steps according to the predetermined number of samples and conduct A/B testing to conclude whether there is cluster-based polarization.
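The projection step can be sketched with classical (Torgerson) MDS, which embeds a matrix of pairwise distances in two dimensions. This is a swapped-in variant for illustration: the story does not say which MDS flavor was used, and how the cohesive values are sampled from a neural network is not specified here, so `cohesion_to_distance` is a hypothetical conversion (higher cohesion means a smaller distance) applied to a stand-in matrix.

```python
import numpy as np


def cohesion_to_distance(C) -> np.ndarray:
    """Hypothetical conversion: higher cohesion -> smaller distance."""
    C = np.asarray(C, dtype=float)
    D = C.max() - C
    np.fill_diagonal(D, 0.0)
    return D


def classical_mds(D, n_components: int = 2) -> np.ndarray:
    """Classical (Torgerson) MDS: embed a distance matrix in low dimensions."""
    D = np.asarray(D, dtype=float)
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n        # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(B)       # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:n_components]
    # Negative eigenvalues (non-Euclidean input) are clipped to zero.
    scale = np.sqrt(np.clip(eigvals[top], 0.0, None))
    return eigvecs[:, top] * scale


# Sanity check: distances between genuine 2-D points are reproduced exactly.
rng = np.random.default_rng(0)
pts = rng.normal(size=(6, 2))
D = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=-1)
emb = classical_mds(D)
D_emb = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
print(np.allclose(D, D_emb))
```

The resulting 2-D coordinates are what would then be plotted and judged for cluster-based polarization in the evaluation step.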

A positive result from this test will be the basis for a dual hypothesis about both the ideal structure of CCGs and the existence of the given clustering based on CCGs.

As for the resources needed to evaluate this clustering strategy, I estimate that each sample will take less than 7 minutes and 30 seconds, since each requires training a completely newly initialized neural network (on an Intel(R) Core(TM) i7-7700HQ CPU @ 2.80GHz with 2GB RAM). At the standard of 30 samples, the entire sampling process will take less than 225 minutes (3 hours and 45 minutes). Sample evaluation can be outsourced at a cost of $5 to $7 per 30 samples.

Cluster membership for each data point can then be resolved with traditional methods (extended from the analysis), given the assurance of the data scientist who ran the clustering algorithm (me, in this case) that there are no overlapping sub-clusters within the previously separated clusters.

Conclusion

The hypothesis that the clustering used in the analysis is purely random can be easily rejected, with a positive ratio of around 21/30 (70%). The analysis results also reflect the coherence in the structure of each cluster and show the reliability of the analysis in the context of the given dataset.
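One way to attach a number to the 21/30 result is an exact one-sided binomial test, swapped in here for illustration rather than taken from the story. It assumes that under the null hypothesis a purely random clustering would be judged "polarized" half the time (p = 0.5); the story does not state a null rate, so that value is an assumption, and the helper name is hypothetical. `scipy.stats.binomtest(21, 30, 0.5, alternative="greater")` should give the same p-value if SciPy is available.

```python
from math import comb


def binomial_sf(successes: int, trials: int, p_null: float = 0.5) -> float:
    """One-sided exact binomial p-value: P(X >= successes) under p_null."""
    return sum(comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
               for k in range(successes, trials + 1))


# 21 positive (polarized) verdicts out of 30 samples, as reported above.
p_value = binomial_sf(21, 30)
print(f"p = {p_value:.4f}")
```

Under that assumed null rate, p is about 0.0214, below the conventional 0.05 threshold; a different null rate would change the conclusion, so it should be fixed before the samples are collected.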

The power of data science lies in its ability to analyze complex datasets and extract knowledge, even when starting from zero.

This real-world analysis shows that data scientists/analysts don’t need domain knowledge to produce valuable insights. With the right data, of course, and a scientific approach, even an outsider can uncover trends and help drive decision-making. The key is to stay curious, use data as your guide, and validate your findings rigorously. Then, the safe zone for your analyses will be the data itself.

